The history of CUDA parts, in the automotive performance and customization sense, traces back to the mid-1960s, when Chrysler's Plymouth division introduced the Barracuda in 1964. The Barracuda quickly gained popularity among car enthusiasts for its sporty design and, in later generations, powerful V8 engine options such as the 426 Hemi. Over the years, aftermarket companies began producing performance parts designed specifically for the Barracuda to improve its speed, handling, and overall performance. As the muscle car era flourished, so did the demand for high-performance components, creating a thriving market for CUDA parts that ranged from exhaust systems to suspension upgrades. Today the legacy continues, with a dedicated community of restorers and modifiers who work to preserve and enhance these classic vehicles. **Brief Answer:** The history of CUDA parts began with the introduction of the Plymouth Barracuda in the 1960s, which sparked demand for aftermarket performance enhancements. Over the years, this led to a vibrant market for parts aimed at improving the speed and handling of these classic muscle cars, a trend that persists today among enthusiasts and restorers.

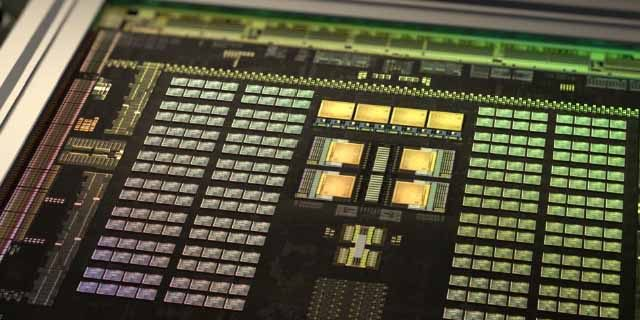

CUDA (Compute Unified Device Architecture), NVIDIA's platform and programming model for general-purpose parallel computing on its GPUs, has both advantages and disadvantages. Its main advantage is speed: by spreading work across thousands of GPU threads, CUDA can substantially accelerate data-parallel workloads such as deep learning, scientific simulations, and image processing. CUDA also comes with a rich ecosystem of libraries (for example cuBLAS, cuFFT, and cuDNN) and tools (such as the Nsight profilers) that ease development and optimization. The disadvantages include a steep learning curve, the fact that CUDA runs natively only on NVIDIA GPUs (so CUDA code does not port directly to AMD or Intel hardware), and the risk of tuning code so closely to one GPU architecture that it becomes harder to port and to scale to future hardware. Overall, CUDA can significantly improve performance for suitable applications, but developers must weigh these benefits against the challenges. **Brief Answer:** CUDA delivers high performance through GPU parallel processing, benefiting applications like deep learning, but it has a steep learning curve, is tied to NVIDIA hardware, and can make code harder to port.
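To make the parallel-processing advantage concrete, here is a minimal sketch of the classic CUDA vector addition, in which GPU threads add array elements side by side. It assumes an NVIDIA GPU and the CUDA toolkit (`nvcc`); the names `addKernel` and `vectorAddOnGpu` and the block size of 256 threads are illustrative choices, not anything prescribed above. The kernel uses a grid-stride loop, so it stays correct whatever grid size is launched.

```cuda
#include <cuda_runtime.h>

// Each thread handles elements i, i + stride, i + 2*stride, ...
// so the kernel is correct for any n, regardless of grid size.
__global__ void addKernel(const float* a, const float* b, float* out, int n) {
    int stride = blockDim.x * gridDim.x;
    for (int i = blockIdx.x * blockDim.x + threadIdx.x; i < n; i += stride) {
        out[i] = a[i] + b[i];
    }
}

// Hypothetical helper: computes out[i] = a[i] + b[i] on the GPU.
// Returns false if any CUDA call fails.
bool vectorAddOnGpu(const float* a, const float* b, float* out, int n) {
    if (n <= 0) return true;  // nothing to do; avoids a zero-block launch
    float *dA = nullptr, *dB = nullptr, *dOut = nullptr;
    const size_t bytes = static_cast<size_t>(n) * sizeof(float);

    // Allocate device buffers and copy inputs host -> device.
    // && short-circuits, so later calls are skipped after a failure.
    bool ok = cudaMalloc(&dA, bytes) == cudaSuccess &&
              cudaMalloc(&dB, bytes) == cudaSuccess &&
              cudaMalloc(&dOut, bytes) == cudaSuccess &&
              cudaMemcpy(dA, a, bytes, cudaMemcpyHostToDevice) == cudaSuccess &&
              cudaMemcpy(dB, b, bytes, cudaMemcpyHostToDevice) == cudaSuccess;

    if (ok) {
        const int threads = 256;                          // illustrative block size
        const int blocks = (n + threads - 1) / threads;   // enough blocks to cover n
        addKernel<<<blocks, threads>>>(dA, dB, dOut, n);
        // cudaMemcpy on the default stream waits for the kernel to finish.
        ok = cudaGetLastError() == cudaSuccess &&
             cudaMemcpy(out, dOut, bytes, cudaMemcpyDeviceToHost) == cudaSuccess;
    }

    // cudaFree(nullptr) is a no-op, so this is safe after partial failure.
    cudaFree(dA);
    cudaFree(dB);
    cudaFree(dOut);
    return ok;
}
```

For an array this small the copies dominate the run time; the speedup only shows up when there is enough arithmetic per byte transferred to outweigh the host-device traffic.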

The challenges of CUDA development center on compatibility, performance optimization, and resource management. Because CUDA targets NVIDIA GPUs, developers must write code that uses the hardware efficiently while still running correctly across GPU architectures with different compute capabilities. Debugging and profiling are also harder than on the CPU: kernel launches are asynchronous, so an error may only be reported at a later API call, and thousands of threads reading and writing the same shared or global memory can produce race conditions if their updates are not synchronized. Device memory must be allocated and freed explicitly, and a missing free causes a memory leak. Finally, moving data between host (CPU) and device (GPU) memory, typically over the PCIe bus, adds overhead, so developers need to design algorithms that minimize transfers and keep data on the GPU as long as possible. **Brief Answer:** The challenges of CUDA include compatibility across GPU architectures, performance optimization, debugging asynchronous and highly concurrent code, and efficient memory management between host and device, all of which require careful attention to fully exploit GPU capabilities.
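The race-condition and asynchronous-error points can be illustrated with a small sketch, again assuming an NVIDIA GPU and the CUDA toolkit. Every thread adds one element into a single shared total; a plain `*total += x[i]` would be a read-modify-write race between threads, so the kernel uses `atomicAdd`. Because the kernel launch returns before the GPU finishes, the code checks both `cudaGetLastError` (launch problems) and `cudaDeviceSynchronize` (faults during execution). The names `sumKernel`, `gpuSum`, and `checkCuda` are hypothetical.

```cuda
#include <cuda_runtime.h>
#include <cstdio>

// Hypothetical helper: prints the failing step and returns false on error.
static bool checkCuda(cudaError_t err, const char* what) {
    if (err != cudaSuccess) {
        std::fprintf(stderr, "%s failed: %s\n", what, cudaGetErrorString(err));
        return false;
    }
    return true;
}

// Adds x[0..n) into *total. Without atomicAdd, two threads could read the
// same old value of *total and one of the updates would be lost.
__global__ void sumKernel(const int* x, int n, int* total) {
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) {
        atomicAdd(total, x[i]);  // indivisible read-modify-write on *total
    }
}

// Hypothetical wrapper: stores the sum of x[0..n) in *result.
bool gpuSum(const int* x, int n, int* result) {
    if (n <= 0) { *result = 0; return true; }
    int *dX = nullptr, *dTotal = nullptr;
    const size_t bytes = static_cast<size_t>(n) * sizeof(int);
    const int threads = 256;                         // illustrative block size
    const int blocks = (n + threads - 1) / threads;  // one thread per element

    bool ok = checkCuda(cudaMalloc(&dX, bytes), "cudaMalloc x") &&
              checkCuda(cudaMalloc(&dTotal, sizeof(int)), "cudaMalloc total") &&
              checkCuda(cudaMemcpy(dX, x, bytes, cudaMemcpyHostToDevice), "copy x") &&
              checkCuda(cudaMemset(dTotal, 0, sizeof(int)), "zero total");
    if (ok) {
        sumKernel<<<blocks, threads>>>(dX, n, dTotal);
        // The launch is asynchronous: check the launch itself, then wait for
        // the kernel so execution errors are reported here, not later.
        ok = checkCuda(cudaGetLastError(), "kernel launch") &&
             checkCuda(cudaDeviceSynchronize(), "kernel execution") &&
             checkCuda(cudaMemcpy(result, dTotal, sizeof(int),
                                  cudaMemcpyDeviceToHost), "copy total");
    }
    // Free device memory on every path to avoid leaks.
    cudaFree(dX);
    cudaFree(dTotal);
    return ok;
}
```

Atomics are the simplest correct fix, but they serialize contended updates. For large inputs, a per-block reduction in shared memory followed by a single `atomicAdd` per block is usually faster.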

If you're looking for talent or assistance with CUDA, there are several avenues to explore. Online communities such as the NVIDIA Developer Forums, social media groups, and professional networks dedicated to GPU programming can connect you with experienced CUDA developers. Platforms like LinkedIn, GitHub, and specialized job boards can also help you find professionals with CUDA expertise. Finally, universities and training programs focused on parallel computing and GPU architectures often have students, researchers, or alumni who are interested in collaborating or providing assistance. **Brief Answer:** To find talent or help with CUDA, explore online forums, professional networks, and job boards, or connect with educational institutions specializing in GPU programming.

Easiio stands at the forefront of technological innovation, offering a comprehensive suite of software development services tailored to the demands of today's digital landscape. Our expertise spans advanced domains such as Machine Learning, Neural Networks, Blockchain, Cryptocurrency, Large Language Model (LLM) applications, and sophisticated algorithms. By leveraging these cutting-edge technologies, Easiio crafts bespoke solutions that drive business success and efficiency. To explore our offerings or to initiate a service request, we invite you to visit our software development page.

TEL: 866-460-7666

EMAIL: contact@easiio.com

ADDRESS: 11501 Dublin Blvd., Suite 200, Dublin, CA 94568